The Rise of Generative AI: How Machines are Learning to Create

Generative AI refers to AI techniques that learn a representation of artifacts from data, and use it to generate brand-new, unique artifacts that resemble but don’t repeat the original data. (Gartner, 2023)

The realm of artificial intelligence (AI) has seen a revolutionary shift in recent years with the advent of Generative AI. Unlike earlier AI systems that follow hand-written rules or are trained only to classify and predict, generative models learn the latent patterns in data and use them to produce new variations. This marks a paradigm shift from AI focused on consuming and analyzing content to AI centered on creating it: machines are no longer confined to the role of passive consumers of information, but can act as creators of original content.

Generative AI spans a multitude of applications: crafting coherent text, composing music, designing eye-catching graphics, simulating realistic human speech, creating lifelike 3D objects, and even formulating scientific hypotheses. With its breathtaking potential, Generative AI is swiftly becoming the vanguard of AI innovation, offering exciting implications across diverse industries, ranging from entertainment and media to scientific research and development, and from customer service to cybersecurity.

The Evolution of Generative AI

The origin of Generative AI can be traced back to the early days of machine learning. Early models faced many challenges: limited computational power, a lack of extensive and accessible training datasets, and the sheer difficulty of imitating human creativity. But it was these early models, with their simple yet robust learning algorithms, that laid the groundwork for the evolution of Generative AI.

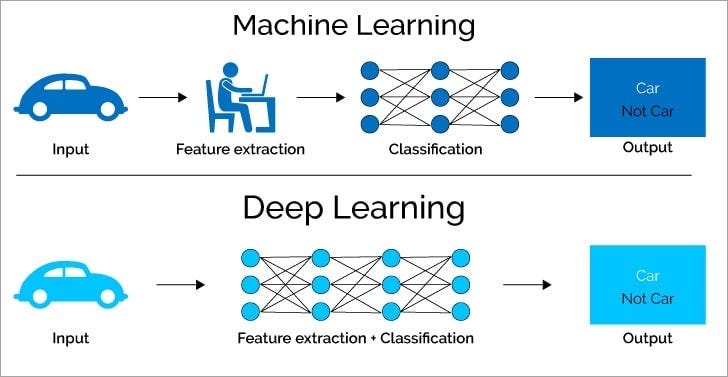

The advent of deep learning and neural networks marked a pivotal point in this evolution. Deep learning, with its ability to process vast amounts of unstructured data, became the engine driving the advancement of Generative AI. With neural networks, machines began to “learn” in a way loosely inspired by the human brain, deciphering patterns and making connections.

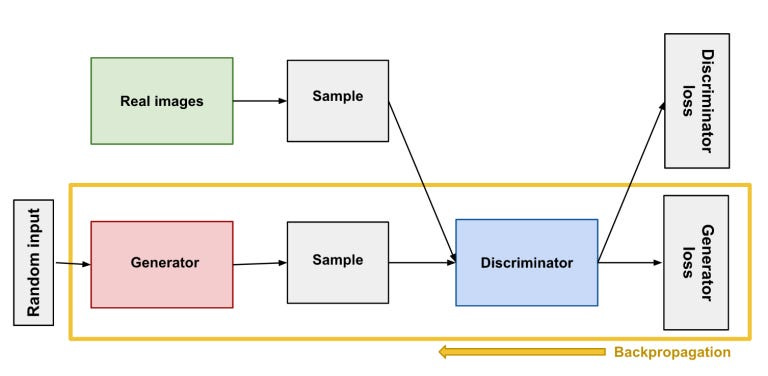

A seminal development in this journey was the creation of Generative Adversarial Networks (GANs), a class of AI algorithms used in unsupervised machine learning. GANs, introduced by Ian Goodfellow and his colleagues in 2014, are composed of two parts: a “generator” that creates the outputs and a “discriminator” that evaluates those outputs. By making these two components compete, GANs dramatically improved the quality of generative outputs.
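The competition between the two components can be sketched with the loss each one tries to minimize. This is a minimal illustration, assuming the discriminator outputs a probability that a sample is real; the function names and the probability values are hypothetical, and no actual networks are trained here.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy the discriminator tries to minimize.

    d_real: probabilities assigned to real samples (ideally near 1)
    d_fake: probabilities assigned to generated samples (ideally near 0)
    """
    real_term = sum(-math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(-math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss: low when fakes are rated as real."""
    return sum(-math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical discriminator outputs, not taken from any trained model.
confident_d = discriminator_loss(d_real=[0.9, 0.8], d_fake=[0.1, 0.2])
fooled_d = discriminator_loss(d_real=[0.6, 0.5], d_fake=[0.5, 0.6])

print(confident_d < fooled_d)  # True: the discriminator does worse when fooled
print(generator_loss([0.1, 0.2]) > generator_loss([0.5, 0.6]))  # True
```

The two losses pull in opposite directions: whatever makes the generated samples look more real lowers the generator's loss and raises the discriminator's.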

Another critical milestone was the emergence of transformer-based models, which employ attention mechanisms to weigh the significance of different input data. This development has enhanced the ability of AI to comprehend and generate more contextually relevant and coherent content.

Transformer models are a class of neural network architectures that have proven highly effective on sequential data such as natural language, and they have also been applied to images. What sets them apart is self-attention, a technique that lets every element of the input weigh its relationship to every other element, irrespective of how far apart they are. This enables the models to capture long-range dependencies and context, improving performance in tasks such as language translation, text generation, and image recognition.
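Self-attention can be sketched in a few lines of plain Python. This is a simplified illustration of scaled dot-product attention, assuming made-up two-dimensional token vectors and omitting the learned query, key, and value projections that real transformer layers apply.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention over lists of float vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Toy 3-token sequence; tokens 0 and 2 are identical but not adjacent.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(tokens, tokens, tokens)
print(weights[0])
```

In this toy input, token 0 attends to the distant token 2 exactly as strongly as to itself, and more strongly than to its neighbor token 1. The weights depend on content, not distance.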

These advancements, supplemented by the exponential increase in computational power and the explosion of available data, have fueled the rapid rise and sophistication of generative models.

Key Players in Generative AI

The stage of Generative AI has been graced by some formidable players whose contributions have redefined the limits of what these technologies can achieve. One of the torchbearers of Generative AI has been OpenAI’s ChatGPT. A natural language processing (NLP) model, ChatGPT has demonstrated an uncanny ability to generate human-like text.

ChatGPT is built on the transformer architecture, which forms the basis of many large-scale language models. It is fine-tuned using Reinforcement Learning from Human Feedback (RLHF), a process that includes a phase based on comparison data: alternative responses that human reviewers rank by quality. This training methodology has allowed ChatGPT to excel at producing contextually relevant textual responses.
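One common way to turn ranked comparisons into a training signal is a pairwise preference loss on a reward model. The sketch below shows that formulation as an assumption; the article does not specify the exact loss used for ChatGPT, and the function name and scores are hypothetical.

```python
import math

def preference_loss(reward_preferred, reward_rejected):
    """Pairwise ranking loss: -log(sigmoid(r_preferred - r_rejected)).

    Small when the reward model scores the human-preferred response
    higher than the rejected one, large when it gets the order wrong.
    """
    margin = reward_preferred - reward_rejected
    return math.log(1.0 + math.exp(-margin))

# Hypothetical reward-model scores for two responses to the same prompt.
agrees = preference_loss(reward_preferred=2.0, reward_rejected=-1.0)
disagrees = preference_loss(reward_preferred=-1.0, reward_rejected=2.0)

print(agrees < disagrees)  # True: agreeing with the human ranking costs less
```

Minimizing this loss over many ranked pairs pushes the reward model to score responses the way human reviewers would, and that reward can then guide further fine-tuning.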

Now powered by GPT-4, ChatGPT has capabilities that go beyond simple question answering and span a range of creative endeavors. It can write essays, draft emails, create poetry, devise fictional stories, and even make jokes. The range and depth of its creative prowess have made ChatGPT a compelling testament to the power of Generative AI.

Another champion in the Generative AI landscape is Google Bard. Initially met with skepticism and challenges, Google Bard has evolved into a contender worth watching. It’s a conversational AI model that can answer questions, draft text, and produce creative writing such as poetry.

Google Bard’s journey reflects the relentless pursuit of excellence in the AI field. Despite its initial hurdles, such as the difficulty in training the model due to the complexity of artistic creativity, the team persisted. The results are a model capable of generating awe-inspiring creative content that echoes human emotion and aesthetic sensibilities.

While both ChatGPT and Bard are examples of Generative AI models, they are powered by different language models. ChatGPT builds on OpenAI’s GPT series and is fine-tuned using RLHF, as described above.

Bard is powered by Google’s Language Model for Dialogue Applications (LaMDA) and Google’s large language model PaLM 2. The initial version of Bard used a lightweight version of LaMDA because it required less computing power and could be scaled to more users. PaLM 2 is a more advanced version of PaLM that allows Bard to be more efficient, perform at a higher level, and fix previous issues. Both LaMDA and PaLM 2 are built on Transformer, Google’s neural network architecture that was open-sourced in 2017.

Hence, both ChatGPT (based on GPT-4) and Bard (based on LaMDA and PaLM 2) are advanced conversational AI models with their own unique features and capabilities. They are built on different underlying models, although both model families rely on the transformer architecture. ChatGPT leverages the advancements made by OpenAI in the GPT series of models, while Bard utilizes Google’s own language models.

Both ChatGPT and Google Bard showcase how far Generative AI has come and hint at the untapped potential it still holds.

The Mechanics Behind Generative AI

To understand the magic behind Generative AI, one must grasp the underlying technologies — machine learning, deep learning, and neural networks. These methodologies are the gears and cogs that drive Generative AI’s engine.

Machine learning is the process by which a system learns from data without being explicitly programmed. Deep learning, a subset of machine learning, utilizes artificial neural networks with multiple layers (hence, ‘deep’) to model and understand complex patterns in datasets. Neural networks are loosely inspired by the structure of the human brain, and they ‘learn’ by adjusting their internal parameters to fit the data they process.
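The idea of learning from data without being explicitly programmed can be illustrated with the smallest possible model. The sketch below fits a straight line by gradient descent; it is a single-layer toy rather than a deep network, and the function name, learning rate, and data are assumptions chosen for illustration.

```python
def fit_line(xs, ys, learning_rate=0.05, steps=2000):
    """Learn w and b so that w * x + b approximates y, via gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

# The data follows y = 2x + 1, but that rule never appears in fit_line.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = fit_line(xs, ys)
print(round(w, 3), round(b, 3))  # close to 2.0 and 1.0
```

Deep learning applies the same loop of measuring the error and nudging the parameters to networks with many layers and far more parameters.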

For example, consider a generative model trained on a dataset of songs. This model can analyze the inherent patterns and characteristics of these songs — melody, rhythm, chord progression, and more. Armed with this learned knowledge, the model can generate a completely new tune that embodies the style of the training dataset, without having a specific song programmed into it.
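A toy version of this idea fits in a few lines. The sketch below uses a simple Markov chain over note names as a stand-in for a neural network, so it is far cruder than a real music model; the melodies and function names are invented for illustration.

```python
import random
from collections import defaultdict

def learn_transitions(melodies):
    """Record which notes follow each note in the training melodies."""
    transitions = defaultdict(list)
    for melody in melodies:
        for current, following in zip(melody, melody[1:]):
            transitions[current].append(following)
    return transitions

def generate(transitions, start, length, rng):
    """Sample a new tune by repeatedly picking a note seen after the current one."""
    note, tune = start, [start]
    for _ in range(length - 1):
        options = transitions.get(note)
        if not options:  # no known continuation for this note
            break
        note = rng.choice(options)
        tune.append(note)
    return tune

# Made-up training data: three short melodies.
training_melodies = [
    ["C", "D", "E", "C"],
    ["E", "F", "G", "E"],
    ["C", "E", "G", "C"],
]
transitions = learn_transitions(training_melodies)
new_tune = generate(transitions, start="C", length=8, rng=random.Random(0))
print(new_tune)
```

Every step in the generated tune is a transition that occurs somewhere in the training melodies, yet the tune as a whole is stored nowhere in the program.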

But what about ‘creativity’ in Generative AI? Isn’t creativity a distinctly human trait, born out of conscious thought and intent? This brings us to a fascinating aspect of Generative AI. Here, ‘creativity’ isn’t quite what we traditionally perceive it to be. It doesn’t stem from an AI’s intent or consciousness (which it doesn’t possess), but from the novel combinations and interpretations it generates based on learned patterns.

Essentially, Generative AI creates outputs that humans deem ‘creative’ because they align with our understanding of novelty, coherence, and aesthetic appeal. So, while Generative AI is not ‘creative’ in the human sense, it exhibits a form of statistical creativity: the ability to craft outputs that are both novel and pleasing based on learned data patterns. We are still far from “artificial general intelligence” (AGI), meaning highly autonomous and versatile AI systems capable of performing a wide range of intellectual tasks at a level equal to or surpassing human intelligence, although research continues to move in that direction.

Transformative Junction in AI

We stand at a transformative junction in the world of AI, with Generative AI at the forefront. From drafting cogent pieces of text to composing melodies that tug at our heartstrings, Generative AI is showing us that machines can indeed venture into domains once reserved for human cognition and creativity.

ChatGPT and Google Bard are but the tip of the iceberg. As our computational resources grow and our understanding of deep learning algorithms deepens, we can anticipate an explosion of new applications and improvements in Generative AI.

Yet, in this brave new world, we must not overlook the significance of ethics. AI, in its many forms, wields significant power. As such, it’s incumbent upon us — the architects of these technologies — to ensure they are employed responsibly and to the benefit of all. From preventing bias in AI outputs to addressing concerns of job displacement, there’s a myriad of ethical implications that need careful consideration and preemptive action.

But let’s not end on a solemn note. After all, we’re witnessing an incredible phenomenon — machines learning to create. It’s a testament to human ingenuity and a glimpse into a future where AI could be our partner in creativity, rather than just a tool.

As we continue to delve into this enthralling world of AI, my next blog post will explore another aspect of generative AI: its applications in a variety of industries.
